Last Quarter: The maximum entropy scam

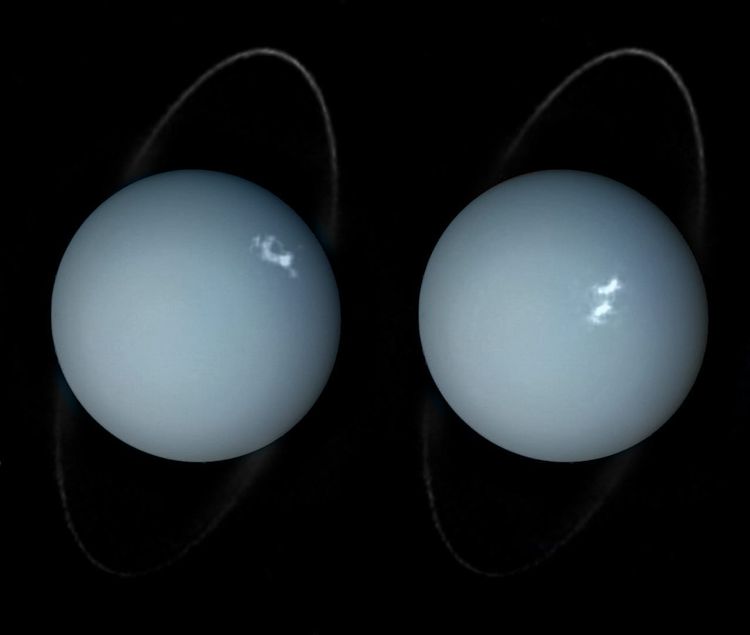

Hello. It's Friday, and at this latitude Orion has begun setting before midnight. Come nightfall, I expect to follow the live feed of four human beings riding an artificial meteor at 23,000 miles per hour on their homecoming from our species' long-delayed return visit to the Moon.

It may surprise some of you to know that I'm generally interested in (and awed by) space exploration, given that I harbor many qualms about how this exploration is conducted and why it's happening. But while the carbon contributions of rocket fuel, the ethics of the government and corporate figures involved, the nationalist rhetoric, and the colonialist mentality of settling or mining other celestial bodies do not sit well with me, I am nothing if not a believer in the value of exploring the cosmos simply to understand its mysteries: not to pretend we can quantify some rational, clockwork universe, but rather to recognize just how much of it is sublime, spiritual, and vastly older than our small selves. Most astronauts, and especially most who have ever gone as far as the Moon, return to Earth with what seems like a deepened sense of humility and investment in our planet's welfare. And despite some fellow leftists' concern that the money spent on NASA could better be spent on terrestrial needs, I find this opinion misinformed: these days NASA's annual budget is less than 1% of US government spending, and it has never been more than 5%, meaning that many valuable state services have always received much more money than NASA, and the real culprit for their robbery is, as always, the fucking military.

Of course, while I admire what a national space program has sometimes accomplished, I would still prefer for it to be approached in a much more sustainable manner, and I would also prefer to see a truly internationally coordinated program. And given that I am none too keen on states in the first place, when I imagine an alternate history of a predominantly eco-anarchist Earth I must then wonder how and why any spaceflight technology would ever have been invented. I think it would still have happened, because it's the tendency of not just our species but all life to wander and spread into whatever cracks are available; but maybe we would have waited until we had some cleaner form of engineering to propel ourselves beyond our planet's orbit, instead of relying on the machinery of war. And perhaps the energy such a project demanded would have been balanced by a society that usually didn't require much energy at all.

In my most tech-skeptical and degrowth-oriented moods, I even have to question whether the energy needed for us to visit anywhere beyond Earth can ever be reconciled with a sustainable future. This troubles me in the same manner as looking at certain technologies that absolutely should not have been invented (such as nuclear weapons and petroleum-driven combustion engines) and wondering whether it was inevitable that they would arise because of the technology that preceded them. Where are we supposed to draw the lines between technology that is intrinsically helpful, technology whose value depends purely on how it's used, and technology that intrinsically will do more harm than good? How do we know which form of technology will lead to another?

I'm going to probe these questions today with an analysis not of spaceflight tech, but of something I know much more about even as a non-programmer: large language models, the software being hyped as "artificial intelligence" but which I prefer to think of as "the maximum entropy scam." I oppose LLMs in most respects, at least as they are currently deployed, marketed, and used. I do not think they are going to take over the world in any way that either their proponents or the so-called doomers anticipate; however, LLMs' proliferation has caused, and is going to keep causing, ecological and societal damage before, one way or another, they all burn out. And what sometimes distresses me about this is the simultaneous sense that this could have been prevented, and that the exact point in history at which we could have prevented it may lie anywhere from decades to millennia in the past. Again, where must the line be drawn?

Background thinking

I have meditated here before on anti-technological and/or anti-civilizational thinking, encapsulated most thoroughly in "Progress does not exist" but also sprinkled throughout many other writings. It might be safe to say that I'm not anti-tech because it's in our very nature to make and use tools, which is all tech is, plus I like and use some pretty modern tech — but I strongly oppose pursuing technological innovation just for its own sake (let alone for a profit) and I do not believe that just because we live in an era of more "advanced" tech it's automatically the best time for any human anywhere to have been alive. Likewise, I'm not anti-civ because I think how to even define civilization is in the eye of the beholder — but I would definitely say I harbor severe pessimism about the longevity of what many modern "Westerners" think of as "our" civilization.

But as I find more and more of my peers and the next generations vehemently rebelling against particular technologies and convinced that this civilization is going to collapse, I do not want to mimic some people's responses of semi-ironically posting snippets from Kaczynski's manifesto, nor do I know whether I'm on the brink of throwing out my smartphone and retreating into the woods as a hermit. Because I understand that the (neo)liberal concept of progress is not real, I embrace tech that I recognize as helpful and reject tech that I recognize as harmful, with no regard for what period in history it "belongs" to and no attachment to whether I should be living a modern lifestyle, or for that matter a completely non-modern one.[1] But opposing the frequently negative outcomes of industrialization should involve real action instead of dwelling on memes about a noted anti-leftist; and because the lines between helpful and harmful tech are so complicated to draw, I often struggle with deciding which tech I should personally embrace versus reject. And if I should reject something but the cost of doing so is significant, what is the cost threshold at which I should still use that tech to survive, versus the moral threshold at which I cannot possibly justify using the tech at all?

This dilemma runs parallel to, and even fully interweaves with, the meme of "no ethical consumption under capitalism," which refers to how just about any product you can buy in this economic system carries some terrible baggage in some part of its supply chain, whether in terms of exploiting the environment, exploiting workers, the company investing in horrible causes, or all of the above. Even if somebody tries to divest from mass-produced items and buy raw materials to make their own things from scratch, the odds of those materials arriving in their home totally "clean" are not very high. Essentially, being tapped into the consumer market at all means relying on the choices of people who own the means of production, who almost certainly have fewer scruples than you. So since not even a fully off-grid commune or homestead can supply everything it needs from the local landscape (indeed, trade of some kind has always enhanced human communities, going very far back), "no ethical consumption under capitalism" pushes every one of us to consider that instead of following extremely strict rules about what's good to consume, we could better expend that energy on dismantling the capitalist structures that create the problem in the first place.

But of course, even though a real degree of moral relativism exists in this regard, my chief complaint about "no ethical consumption under capitalism" is that I find it typically waved about in a nihilistic or at least blasé manner by people who want to excuse a lazy investment in products and services that by any objective measure do have more-ethical alternatives that are financially feasible for those people. It's a kind of inverse "perfect being the enemy of the good," wherein because perfection is impossible somebody doesn't even try to do what they can, paralyzed by the status quo. 95% of the time[2], a family at even my household's hamstrung income level can afford to buy anything (anything!) somewhere other than Amazon. They just need to know where to look, whether offline or online, but a lot of people have simply been trained out of doing so, and they are spoiled by the perceived immediacy of their orders. The majority of leftist or leftist-minded people I know still regularly buy from Amazon because "no ethical consumption under capitalism."

Be that as it may, we could try to act ethically, sometimes.

And the same can be said of the technological-civilizational grey zone we all find ourselves in. Some lines can be clearly drawn even if most of the lines cannot be so clear. LLMs are a key example I would provide for this: to whatever extent they could ever produce a societal benefit at all, we do not live in the kind of society where that is the case, and certain costs associated with their existence and usage (under current circumstances and for the foreseeable future) are simply indefensible. So in this next section, I will enumerate the many, many reasons why I have yet to interact with any LLM and intend to keep it that way for as long as humanly possible. From there, I still have to explore where, if anywhere, we could or should have drawn a line before LLMs reached their "full" form or ongoing influence; but while there's so much ambiguity on that front, I want to plainly establish that some principles of avoidance around particular technologies can and should be maintained.

LLMs: if the monster had no senses or learning ability, said everything Frankenstein wanted to hear, and razed all of Switzerland in the process

The damage wrought by LLMs can be summarized in a list, but I'm going to expand on the first and last items in greater detail because they are the most important to understand and simultaneously the least well understood by people who overestimate LLMs' capabilities.

So, in list form first:

1. LLMs do not think in any way that remotely approaches how a human or any animal or any living thing thinks, which means it's better not to assume they "think" at all. This should be extraordinarily simple to recognize even if we have divergent opinions on the hard problem of consciousness, and even if (as I will go into later) we could debate whether an LLM still has some kind of animacy. LLMs are not designed or constructed in ways that allow them to perform cognition. They do not perform it. They are text-generation programs that perform algorithmic calculations to determine what output to provide in response to an input. Contrary to what their advocates claim and what doomers fear, this process hugely oversimplifies what neuroscientists, psychologists, philosophers, and other people who actually study brains and thinking know cognition to involve. It is completely inappropriate to deploy an LLM for any purpose wherein human cognition is critical, whether in the arts, the sciences, or basic functioning in society. It is also completely irresponsible to expose human minds to the sycophantic feedback loops of the one-way communication that happens with LLMs.

2. Even for tasks that can fairly receive some mechanical automation, the capitalist urge to prioritize automation over human labor is a death spiral to which no ground should be ceded.

3. LLMs have margins of error that should be unacceptable for most industries, and they are therefore not even useful for as many things as their advocates pretend.

4. LLMs and related neural networks rely on heavily exploited human labor to produce their ground-truth training data, and they also frequently require undisclosed workers in the Global South to make real-time corrections in order to keep up the ruse of an automation that isn't even fully happening.

5. Anything so-called "creative" that's generated by an LLM is nakedly ugly garbage that not a single person I know actually enjoys, unless they never had any taste in the arts to begin with and fundamentally lack the capacity to understand where creative instincts come from.

6. LLMs are not at all energy efficient, and their training and operation are inherently a financial sinkhole. As documented by clear-eyed journalists like Ed Zitron[3], all the LLM startups and all the tech giants pouring money into LLMs are basically just moving money around among themselves, creating a stock market bubble that's going to demolish the US and global economies when it does burst. The only company really profiting is Nvidia, the manufacturer of the hardware that LLMs rely upon: hardware that depreciates at an extraordinary speed, which guarantees that whenever "enough" data centers finally get built, everything inside them will need upgrading almost immediately, over and over again.

7. In tandem with the financial consequences of poor energy efficiency, LLMs are the worst thing yet to happen in the ongoing eco crisis, exploding carbon footprints and draining already severely stressed water supplies. In capitalism's impossible quest for a laborless, perpetual money machine, the oligarchs really are prepared to render the world uninhabitable even for their own descendants. They are just that heedless. The logic of their minds has grown just that entropic.

Now I will go back to point 1. I treat it as point 1 because by understanding it we also can understand all the other points with minimal explanation and also sidestep common fallacies about those points. I also prioritize point 1 because in combination with point 7 these become the alpha and the omega of LLMs' actual — not hyped — dangers.

Let's unpack what I've said with point 1. On what basis do I claim that an LLM does not think or might as well be treated as not thinking? Well, first of all, I cannot speak with the authority of someone who builds these things, but as already indicated, the people who build them are generally trained in how computers work, not in how organic brains work. Even when exceptions apply to that rule, the AI hypers who lead these tech companies are definitely not trained in brains, cognition, or philosophy of mind, and they often aren't even software engineers, just aptly termed business idiots who think they know enough about LLMs and computers to get other business idiots excited about the whole thing.

By contrast, although I am no expert in my own fields of study or work, I still spent four years studying undergraduate-level philosophy and linguistics, and although I'm leery of revealing too many details of my work history here, until relatively recently I spent eleven years engaging with neuroscientific research that incorporated machine learning, giving me a daily direct window into a) how unbelievably complex and poorly understood our brains still are, and b) the severe limitations and extreme processing needs of "AI" that's trained and operated in a similar manner as an LLM. I do not need to look at an LLM's source code directly to know the core principles behind how it works, or to be intimately acquainted with the kinds of malfunctions or bugs-as-features it will therefore have, no matter how many improvements are made.

And on a higher level, I find it transparently obvious why, even if LLMs were improved over time instead of (more likely) suffering from model collapse, they just have fewer useful applications than other neural networks or broader varieties of deep learning models that are trained to generate or analyze numerical scientific data. It is not out of the question that deep learning programs could (if the ecological tradeoff weren't too dire) help with otherwise too-gargantuan research projects that could better humanity and the world. I have seen it firsthand, even with the limitations that are built in: the areas in which human oversight still takes place. But LLMs are not the specific models to do this, and crucially, deep learning systems have core physical barriers to learning the way an organic life form does. Some do not learn from anything except the data they are initially fed, and even those that can "improve themselves" over time cannot work from anything other than the experiences that are fed to them. They cannot seek their own new experiences. They do not have sensory systems with which to digest the universe directly; they must rely purely on intermediary interpretation. They do not have bodies that give them a sense of somatic being-ness. They do not grow like babies who are taught by humans who genuinely love them, and they do not experience life as life.

I do not want to lay emphasis on the sensory factor to the point of implying that people living their whole lives with fewer senses (e.g. congenitally blind or D/deaf people) or fewer limbs cannot think in the same way that other humans do. The key point is that all humans by definition have bodies. All living things with nervous systems contain those nervous systems within bodies. Our bodies react to and relate with the world in real-time, whereas LLMs and their relatives are the ones operating in what are essentially simulations, responding in discrete, digitally limited ways instead of in analogue.

Could human beings ever invent artificial intelligence that went beyond this? What about wedding a digital brain with a robotic body? What about the replicants of Blade Runner or similar media, where the AIs are even formed from organic matter and seem to be completely indistinguishable from humans apart from having implanted memories, super-endurance, and deliberately limited lifespans?

Well, perhaps. I do not know enough about all of those things to say they're fully impossible. But I do know that present technology is abysmally far from achieving any of these things, and that the threats to humanity posed by such tech are not really about what the tech can do, and much more about what people with too much power and too little caution will freely let the tech do. We don't need Skynet for a so-called AI to initiate global nuclear annihilation. We just need someone to trust a slightly dogshit automation too well.

Now, if somebody did manage to invent replicants, I would be very concerned, because then they would have essentially become Victor Frankenstein, producing a creature who was truly so close to a human being on an ontological level that I would in fact argue they deserved to have their capabilities and rights recognized; like Frankenstein, this creator would have changed the natural order of the world so profoundly that the (spi)ritual consequences would be enormous; as for the creation, they would probably suffer immensely as the ultimate Other, alternately enslaved or exiled. To me this is enough to forbid ever pursuing such research and development. The monster would not be evil, but humanity would be so unready for it that great evil would result. However, by the same token, I would actually seek to befriend or at least engage with such a creature, accepting the new and strange reality rather than trying to exterminate it.

But none of this has happened with LLMs. Quite the reverse. Instead, LLMs have been marketed relentlessly as human-like and intelligent, when they are nothing more than elaborate con jobs. Or if you prefer: Ouija boards. If you look up the history of very real psychotic violence caused by Ouija board usage, it bears an alarming resemblance to LLM-caused psychosis. In both cases, people receive messages that they perceive as coming from the medium they are interacting with, but that are really coming from a much more solipsistic source; in the case of a Ouija board, the message comes from the operator, and in the case of an LLM, the message is generated from an enormous corpus of digitized text. In either case, the recipient winds up reading something that affirms a belief they already held on some level.[4] Over and over.

An LLM is perhaps a kind of magic in the way that any digital technology is; think of how every day we go about with magic mirrors that let us speak to each other across long distances, or magic tablets that let us write each other messages that appear in two places simultaneously. In this regard, I would at least allow that an LLM is animate. But it is further from actual life than even a virus is. It is the kind of magic that should not simply be released to an unsuspecting public with the encouragement for us to use it in everything we do.

For that reason alone, under any societal circumstance I would probably limit what I used LLMs for, and how often. I would likewise want to engage in significant psychological and spiritual preparation for using them, much like for using psychedelic drugs.

But now let me bring back point 7 from my list above: the ecological cost. These tools are not just relatively useless baubles that can rot minds with excess interaction. Because of the energy and water consumption that data centers require (and will always require for as long as LLMs remain so inefficient, or for as long as oligarchs remain obsessed with economic growth at all costs), anybody who uses an LLM when they don't strictly need to, which is 99.9% of the time, is contributing to ecological damage for no good reason. It's one thing to feel stuck using a technology like automobiles, which have existed for decades and got entrenched as the key transit method in various places before people fully understood what the consequences were. It's another thing to adopt an emergent technology that we already know causes damage and that we could therefore freely opt out of before it gets off the ground.

I know that focusing too much on individual choice over collective action is not how revolutions are accomplished, and I've addressed that at length here. Nonetheless, I believe very strongly that we need to not let the scam artists behind these particular inventions make us believe that they're so inevitable we have to give up and start adopting them. They are not inevitable, especially because they don't fucking work. Imagine not adopting automobiles in the early 20th century, but instead adopting a machine that causes the same amount of air pollution while carrying you somewhere at a speed slower than you could walk or wheel yourself. Why is anyone listening to people selling the same kind of nonsense right now?

For me, the choice not to use LLMs is very easy, and I feel adamant moral conviction about it. This is not necessarily the last stand against capitalism, but it is a keystone of that stand. I refuse to let actual humans and human labor be relegated en masse to "surplus population" worthy of climate-culling, all for a glorified party trick. I refuse to give tech giants any reason to invest — as they are now investing — in fossil fuels that they had supposedly given up, just to try filling their party trick's energy shortfall.

They are truly set on burning and drying up the planet for absolutely nothing but a return on their investment that will never even come.

I will never cede them an inch of ground.

Where did we go wrong?

But how did it come to this?

Yes, on a certain level I know that the cause is purely systemic: high-profile boss-class people are pushing LLMs because such technology is a natural thing to pursue if your profit motive insists that you automate, automate, automate. This whole affair began with industrialization and capitalism itself. And this does not dictate to me that I should regard any specific predecessor to LLMs as also being "part of the problem."

At the same time, many other technologies have been direct consequences of this economic system and method of production. These technologies can of course have benefits that LLMs do not; not every product of capitalism is an intrinsic scam. After all, modern electricity has allowed for some wonderful things, and when generated in the right ways it can supersede more-polluting technologies that are older.

But then again, even when we use that tech freely, it's always worth thinking about how much electricity we use, and what to do about the fact that it often still comes to us at an ecological cost. I have no easy answers here, but we need to at least be constantly asking the questions.

By the same token, many technologies that we take for granted today pre-date capitalism significantly but were not necessarily a net benefit for humanity when they got invented, either. I've already written so much here today that I shouldn't take a detour down the path of asking, "Was the invention of writing actually bad?" But bear in mind that this is not a frivolous or trolling question at all. Although the written word provides plenty of advantages over an oral tradition, this is a two-way street, and when human minds began to be exposed to writing, this may have produced far-reaching effects on our cognition, including the loss of certain skills. Look it up, or perhaps I'll write something here about it later. Either way, of course I'm writing here now, but I have to wonder whether the invention of writing entrenched a focus on external recordkeeping that facilitated the invention of computers, which then facilitated the invention of LLMs.

Could or should we have interrupted that chain of events? At what point?

I do not have an easy answer here, either, but considering the consequences that have at least arisen from the internet's invention, and from digital tech as a whole, I know that I do not want to single out LLMs as if they aren't potentially fruit from a poison tree. If LLMs are uniquely dangerous, I want to be sure I hold that conclusion from thorough technological examination, and not just as an instinctive reaction to damage I've been able to actively watch and recognize for what it is.

So far, I have at least come around to the idea that computers and the internet have their benefits, but they were probably invented at the wrong time. Our society was not in a position to handle their impact and maximize the benefits. Since the benefits do still exist to some degree, this doesn't mean I am ever likely to wholly stop using computers or the internet. However, like I said above, we are playing with magic mirrors. I want to start treating them with even more caution than I already have been.

Especially because, as some of you know by now, as I approach the 17-week mark I am now expecting to finally bring a child into this world. What technology they are raised with and exposed to will depend a lot on what my owner and I think is fitting technology for a child, or anyone, to ever use.

[1] I know that my nose-wrinkling about modernity as an ideological status might still provoke the suspicion that I don't like living in a world with antibiotics. I promise that I like it, even though the upswelling of antibiotic-resistant bacteria might make the whole thing a moot point eventually, and even though taking antibiotics legitimately requires taking better care of our bodies' inborn microbiomes than most of us do. These things can be true and I can also still use modern medicine for an infection.

[2] I'd even say 100% of the time, but I'm allowing a 5% margin of error for any ultra-niche products I don't personally know about that have perhaps had their non-Amazon supply chains so thoroughly destroyed that now people who need those things have literally no choice. I refuse to accept arguments, though, that there should also be some margin for people living in highly remote areas that "only" Amazon can reach quickly. Amazon's logistics network is massive, but it has still not surpassed postal services or private shipping companies like UPS; otherwise it would not need to rely on those carriers to cover the last few miles for really remote addresses.

[3] Whose acerbic manner of writing or speaking is often not my cup of tea but whose investigative work and analysis is top notch.

[4] Note that I am not against people using Ouija boards. I do not offer these observations to suggest that Ouija boards are completely useless divination tools, nor to suggest that the boards are wildly dangerous. I'm sure that for divination they can actually be helpful, and I think that their risks are much lower than those of seeking therapy or spiritual insight from a chatbot. But understanding the real effects that the boards have had is pertinent to understanding what's involved in LLM psychosis. I also have not yet fucked with the Ouija board that very much appeared in my owner's possession under mysterious circumstances, because I am quite sure that the weight of what can be divined with it is probably comparable to that of a pendulum: brutal.

Thank you for reading. Next week, I'm going to have a post for Occult-tier subscribers, and then the week after I'll have another public post, this time reflecting on my progress at learning to knit.
